Building an AI Code Review Agent

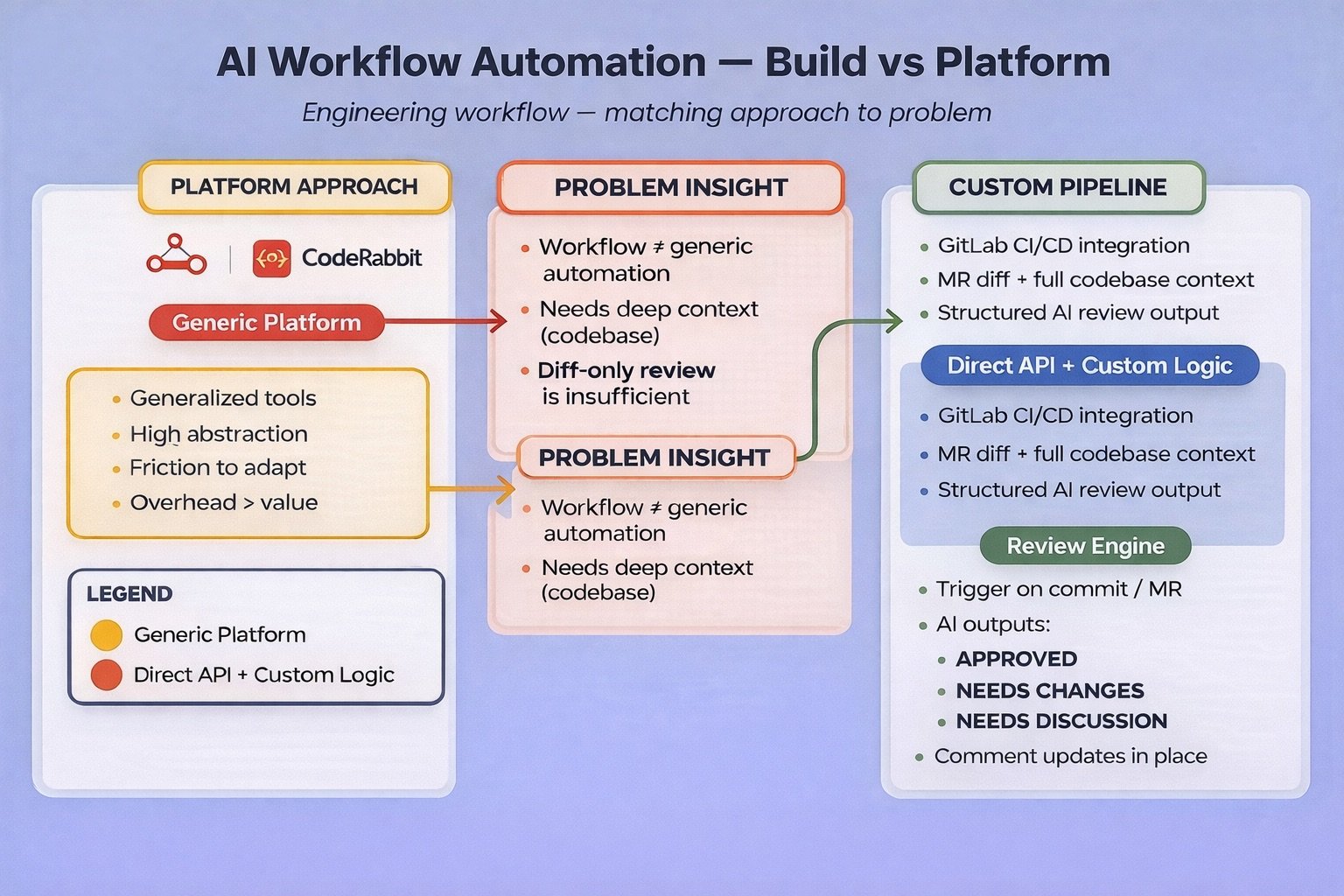

After limited success with generalised automation platforms, four hours of focused building produced an AI code reviewer integrated into GitLab CI/CD, with full codebase context, that works exactly the way we needed.

Automating parts of the development workflow with AI has been on the list for a while. A few weeks ago I finally made time to explore it properly. What followed was equal parts frustration with existing platforms and genuine satisfaction at what a few hours of focused building produced.

What We Tried First

n8n

I have used Zapier before, so n8n felt like a natural starting point. I ran out of free credits before I could properly understand how it worked. When I did get it running, I realised that generalised automation platforms like n8n are complicated in ways that do not serve focused use cases well. They do too many things, some of them well and some not. At the end of it, what you have is a builder-step interface to an AI. That felt like a lot of scaffolding for very little.

CodeRabbit

Tried CodeRabbit for automated code reviews specifically. Could not make head or tail of how to use it in the limited window I had to explore it. Something that should not be difficult was. Moved on.

What We Built Instead

After two false starts I stopped trying to fit our workflow into someone else’s platform and built something directly.

The goal was an AI code reviewer integrated into our GitLab CI/CD pipeline: one that reviews code based on the merge request (MR) diff but with the entire codebase in context. That second part is important. A review without full codebase context misses too much. Isolated diff review catches syntax and obvious issues but misses architectural concerns, inconsistencies with existing patterns, and violations of conventions established elsewhere in the codebase.

The stack:

- GitLab CI/CD for pipeline integration

- GitLab APIs for MR interaction

- @anthropic-ai/claude-code npm package for the AI review step

The build took approximately four hours end to end — including learning enough of the GitLab API to do what we needed.

How it works:

When a developer pushes to a feature branch or opens an MR, the pipeline triggers a review step. Claude receives the MR diff alongside the full codebase as context and produces a structured review with a clear status:

- APPROVED — ready to merge

- NEEDS CHANGES — specific issues identified that need to be addressed

- NEEDS DISCUSSION — questions or concerns that warrant a conversation before proceeding

The prompt:

Review the merge request. Use 'git diff origin/$CI_MERGE_REQUEST_TARGET_BRANCH_NAME...HEAD' to see the changes. Follow the review guidelines in REVIEW.md. Output ONLY raw JSON with 'status' and 'review' fields as specified in CLAUDE.md. Do not wrap in markdown code fences.
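One way a pipeline step could hand this prompt to Claude is sketched below. It is not our actual job script. It assumes the `claude` CLI installed by the @anthropic-ai/claude-code package is on the PATH and is run in its non-interactive print mode (`-p`); the function names `build_prompt` and `run_review` are hypothetical.

```python
import os
import subprocess

# The prompt from above, with the target branch left as a placeholder.
PROMPT_TEMPLATE = (
    "Review the merge request. "
    "Use 'git diff origin/{target}...HEAD' to see the changes. "
    "Follow the review guidelines in REVIEW.md. "
    "Output ONLY raw JSON with 'status' and 'review' fields as specified in CLAUDE.md. "
    "Do not wrap in markdown code fences."
)


def build_prompt(env=None):
    """Fill in the target branch from GitLab's predefined CI variable."""
    env = os.environ if env is None else env
    target = env["CI_MERGE_REQUEST_TARGET_BRANCH_NAME"]
    return PROMPT_TEMPLATE.format(target=target)


def run_review(prompt):
    """Run the CLI once and return whatever it printed (assumed invocation)."""
    result = subprocess.run(
        ["claude", "-p", prompt],
        capture_output=True,
        text=True,
        check=True,  # fail the job if the CLI exits with a non-zero status
    )
    return result.stdout
```

Reading the branch name in Python rather than relying on shell expansion keeps the prompt identical whichever shell the runner uses.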

The review guidelines file, REVIEW.md (see References), defines severity labels, architecture principles, code quality rules, and the expected output format.
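Because the pipeline depends on the model returning exactly the JSON shape the prompt asks for, it helps to validate the reply before acting on it. The following is a minimal sketch, assuming the `status` field carries one of the three labels listed earlier verbatim; `parse_review` is a hypothetical helper, not part of any library.

```python
import json

# Assumed to match the status labels exactly as written in this post.
ALLOWED_STATUSES = {"APPROVED", "NEEDS CHANGES", "NEEDS DISCUSSION"}
FENCE = "`" * 3  # markdown code fence, built indirectly to keep this block tidy


def parse_review(raw):
    """Return (status, review) or raise ValueError if the reply is malformed."""
    text = raw.strip()
    # Tolerate a fenced reply even though the prompt forbids it.
    if text.startswith(FENCE):
        lines = text.splitlines()[1:]  # drop the opening fence line
        if lines and lines[-1].strip() == FENCE:
            lines = lines[:-1]  # drop the closing fence line
        text = "\n".join(lines)
    data = json.loads(text)  # JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    status = data.get("status")
    if status not in ALLOWED_STATUSES:
        raise ValueError(f"unexpected status: {status!r}")
    review = data.get("review")
    if not isinstance(review, str) or not review.strip():
        raise ValueError("missing or empty 'review' field")
    return status, review
```

Failing loudly on an unexpected reply means a malformed review is never posted as if it were a real one.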

Each review includes sections covering what is working well, what needs attention, and the severity of each issue. The review posts directly as a comment on the MR via the GitLab API. As the MR evolves — as the developer addresses feedback and pushes new commits — the review comment updates in place rather than creating a new comment each time.
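The update-in-place behaviour can be sketched roughly as follows, using the GitLab merge request Notes API. This is an illustration under assumptions rather than our exact code: the hidden `MARKER` comment used to recognise the bot's earlier note and the helper names are hypothetical, and `existing_notes` is assumed to have already been fetched from the MR's notes endpoint.

```python
import json
import urllib.request

# Hypothetical hidden tag embedded in the comment so it can be found again later.
MARKER = "<!-- ai-code-review -->"


def plan_note_request(api_url, project_id, mr_iid, existing_notes, review_text):
    """Decide whether to create a new note or update the one posted earlier."""
    notes_url = f"{api_url}/projects/{project_id}/merge_requests/{mr_iid}/notes"
    body = f"{MARKER}\n{review_text}"
    for note in existing_notes:
        if MARKER in note.get("body", ""):
            # An earlier review exists: overwrite it in place.
            return "PUT", f"{notes_url}/{note['id']}", body
    # No earlier review: create the first comment.
    return "POST", notes_url, body


def send_note(method, url, body, token):
    """Send the planned request, authenticating with a GitLab access token."""
    request = urllib.request.Request(
        url,
        data=json.dumps({"body": body}).encode("utf-8"),
        method=method,
        headers={"PRIVATE-TOKEN": token, "Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

In a pipeline, `api_url`, `project_id` and `mr_iid` would typically come from the predefined variables `CI_API_V4_URL`, `CI_PROJECT_ID` and `CI_MERGE_REQUEST_IID`. Separating the decision from the HTTP call keeps the create-or-update logic easy to test without a GitLab instance.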

We also connected this to direct commits on feature branches, before an MR is even created. Developers get feedback while they are still working, not just at the point of review. Early feedback is significantly cheaper to act on than late feedback.
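For branch pipelines that run before an MR exists, `CI_MERGE_REQUEST_TARGET_BRANCH_NAME` is not set, so the diff needs a different base. The sketch below shows one possible choice, assuming that comparing against the project's default branch (`CI_DEFAULT_BRANCH`) is acceptable; the helper names are hypothetical and this may not match every branching model.

```python
def diff_base(env):
    """Pick the ref to diff against: the MR target if present, else the default branch."""
    target = env.get("CI_MERGE_REQUEST_TARGET_BRANCH_NAME") or env.get("CI_DEFAULT_BRANCH")
    if not target:
        raise KeyError("neither an MR target branch nor a default branch is set")
    return f"origin/{target}"


def diff_command(env):
    """Build the git command that shows changes made since the branch diverged."""
    # The three-dot form diffs HEAD against the merge base with the chosen ref.
    return ["git", "diff", f"{diff_base(env)}...HEAD"]
```

For example, in a branch pipeline where only `CI_DEFAULT_BRANCH=main` is set, `diff_command` returns `["git", "diff", "origin/main...HEAD"]`.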

The reviews have been outstanding. The quality and consistency of feedback have been noticeably better than what a time-pressed human reviewer produces on a first pass, and it frees up review time for the work that genuinely needs human judgment.

What It Costs

After approximately 50 reviews, the total API cost has been around $10. That works out to roughly $0.20 per review — for feedback that would otherwise consume human time on something that can be automated.

Where This Goes Next

The current implementation reviews code against good engineering practice and the existing codebase. The natural next step is reviewing code against intent — specifically, validating that an MR actually does what the corresponding task describes.

Pulling the task description, acceptance criteria and context into the review prompt would give Claude the ability to flag not just how code is written but whether it addresses what was actually asked for. That is a meaningfully different and more useful kind of review.

Further out, the same architecture supports a more ambitious workflow. An agent that reads a task, understands the codebase, makes the required changes, commits them, and raises an MR. A second agent reviews that MR. If APPROVED, it merges and triggers a release.

The infrastructure for this is not far away. The four hours we spent on the code review agent covered most of the foundational work — GitLab API integration, pipeline hooks, AI interaction with codebase context. The incremental steps to get to a fully agentic workflow are smaller than they might appear.

The Broader Observation

Generalised AI automation platforms make sense for certain use cases — connecting disparate SaaS tools, orchestrating workflows across systems that don’t talk to each other. For engineering workflows specifically, the abstractions get in the way.

Building directly — using the AI API, integrating with our existing tools via their APIs, fitting into our existing pipeline — took four hours and produced something that works exactly the way we needed it to. It will also be straightforward to extend in the directions that matter to us.

References

- REVIEW.md — Review guidelines template used in this implementation (download)

- Anthropic — @anthropic-ai/claude-code npm package

- Anthropic — Prompt Engineering Guide

- GitLab — Predefined CI/CD Variables including CI_MERGE_REQUEST_TARGET_BRANCH_NAME

- n8n — Workflow Automation Platform

- CodeRabbit — AI Code Review Tool